Architecture of Kubernetes & OpenShift

Kubernetes

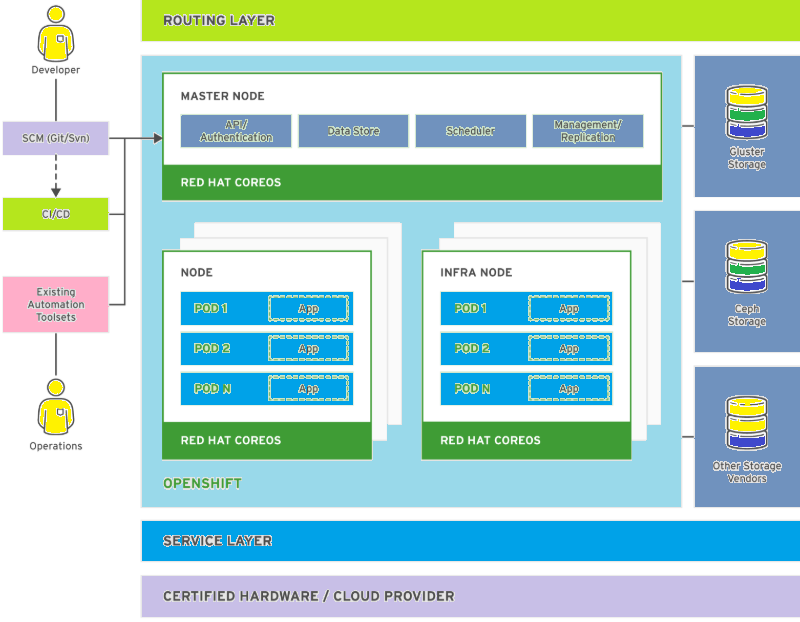

Kubernetes is an open-source orchestration platform that simplifies the deployment, management, and scaling of containerized applications. Its main advantage is that it uses several nodes to ensure the resiliency and scalability of the applications it manages. Kubernetes forms a cluster of node servers that run containers and are centrally managed by a set of master servers. A server can act as both a master and a node, but those roles are usually segregated for increased stability. Kubernetes is declarative: you describe the desired state of your applications, and Kubernetes works to make the cluster match it.

Kubernetes Terminology

Node: A server that hosts applications in a Kubernetes cluster.

Master Node: A node that runs the control plane of a Kubernetes cluster. Master nodes provide basic cluster services such as the API and controllers.

Worker Node: Also known as a compute node, a worker node executes workloads for the cluster. Application pods are scheduled on worker nodes.

Resource: A resource is any kind of component definition managed by Kubernetes. It contains the configuration of the managed component (e.g., the role assigned to a node) and the current state of the component (e.g., whether the node is available).

Controller: A controller is a Kubernetes process that watches resources and makes changes to move the current state toward the desired state.

Label: A key-value pair that can be assigned to any Kubernetes resource. Selectors use labels to filter eligible resources for scheduling and other operations.

Namespace: A namespace defines the scope for Kubernetes resources and processes, so that resources with the same name can exist in different namespaces.

Operators: Operators are Kubernetes plug-in components that can react to cluster events and control the state of resources.
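To make the label and selector idea concrete, here is a minimal sketch of equality-based selection: a resource matches when every key-value pair in the selector is present in its labels. The pod names and labels are hypothetical, and real Kubernetes selectors also support set-based expressions that this sketch leaves out.

```python
from typing import Dict, List

def matches(labels: Dict[str, str], selector: Dict[str, str]) -> bool:
    """True if every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())

def select(resources: List[dict], selector: Dict[str, str]) -> List[str]:
    """Return the names of resources whose labels satisfy the selector."""
    return [r["name"] for r in resources if matches(r["labels"], selector)]

# Hypothetical pods with labels attached.
pods = [
    {"name": "web-1", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "web-2", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "db-1", "labels": {"app": "db", "tier": "backend"}},
]

print(select(pods, {"app": "web"}))       # ['web-1', 'web-2']
print(select(pods, {"tier": "backend"}))  # ['db-1']
```

Note that an empty selector matches every resource in this sketch, because `all()` over no conditions is true.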

Kubernetes Resources

Kubernetes has six main resource types that can be created and configured using a YAML or a JSON file.

Pods (PO)

A pod is the basic unit of work in Kubernetes. It represents a collection of containers that share resources, such as an IP address and persistent storage volumes.

Services (SVC)

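A service defines a single IP/port combination that provides access to a pool of pods. Services spread client connections across the pods; depending on the kube-proxy mode, backends are chosen in round-robin fashion (IPVS mode) or at random (iptables mode).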
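The round-robin behaviour can be sketched in a few lines: the service hands each new connection to the next pod endpoint in turn and wraps around at the end. This is only an illustration of the scheduling idea; the class name and the pod IP addresses are hypothetical, and real services are implemented by kube-proxy rules rather than by application code.

```python
from itertools import cycle
from typing import Iterator, List

class RoundRobinService:
    """Hands out backend endpoints in turn, like a round-robin service."""

    def __init__(self, endpoints: List[str]) -> None:
        if not endpoints:
            raise ValueError("a service needs at least one endpoint")
        self._next: Iterator[str] = cycle(endpoints)

    def pick(self) -> str:
        """Return the endpoint that should receive the next connection."""
        return next(self._next)

# Hypothetical pod endpoints behind one service.
svc = RoundRobinService(["10.128.0.11:8080", "10.128.0.12:8080", "10.129.0.7:8080"])
for _ in range(4):
    # Prints the three endpoints in order, then starts over with the first.
    print(svc.pick())
```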

Replication Controllers (RC)

A replication controller defines how pods are replicated (horizontally scaled) across different nodes. Replication controllers are a basic Kubernetes mechanism for providing high availability for pods and containers.
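The following minimal sketch shows the controller pattern behind a replication controller: compare the desired replica count with the pods that are actually running, then create or delete pods until they match. The in-memory pod list and the `name-N` naming scheme are hypothetical simplifications; a real controller talks to the Kubernetes API and runs this comparison continuously.

```python
from typing import List

def reconcile(desired: int, running: List[str], name: str = "app") -> List[str]:
    """Return the pod list after one pass that moves `running` toward `desired`."""
    if desired < 0:
        raise ValueError("desired replica count cannot be negative")
    pods = list(running)  # work on a copy; the caller's list is left untouched
    counter = 0
    # Too few pods: "create" new ones using the first unused name-N suffix.
    while len(pods) < desired:
        counter += 1
        candidate = f"{name}-{counter}"
        if candidate not in pods:
            pods.append(candidate)
    # Too many pods: "delete" the surplus, here simply from the end of the list.
    while len(pods) > desired:
        pods.pop()
    return pods

# app-2 crashed, so only app-1 and app-3 are running; one pass restores three pods.
print(reconcile(3, ["app-1", "app-3"]))  # ['app-1', 'app-3', 'app-2']
# Scaling down from three replicas to one.
print(reconcile(1, ["app-1", "app-2", "app-3"]))  # ['app-1']
```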

Persistent Volume (PV)

A persistent volume defines a storage area that Kubernetes pods can use.

Persistent Volume Claim (PVC)

A PVC represents a request for storage by a pod. A PVC links a PV to a pod so that its containers can use it, usually by mounting the storage into the container's file system.
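As a simplified sketch of how a claim is matched to a volume: pick the smallest free PV that offers the requested access mode and at least the requested size, and mark it as bound. The PV names and sizes are hypothetical, and the real Kubernetes binder considers more criteria (storage classes, selectors, multiple access modes) than this illustration does.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

@dataclass
class PersistentVolume:
    name: str
    capacity_gi: int          # size in GiB
    access_modes: Set[str]    # e.g. {"ReadWriteOnce"}
    bound: bool = False

def bind_claim(volumes: List[PersistentVolume], request_gi: int, mode: str) -> Optional[str]:
    """Bind a claim to the smallest free PV that satisfies size and access mode."""
    candidates = [
        v for v in volumes
        if not v.bound and mode in v.access_modes and v.capacity_gi >= request_gi
    ]
    if not candidates:
        return None  # no match: the claim would stay Pending
    best = min(candidates, key=lambda v: v.capacity_gi)
    best.bound = True
    return best.name

# Hypothetical volumes available in the cluster.
pvs = [
    PersistentVolume("pv-small", 1, {"ReadWriteOnce"}),
    PersistentVolume("pv-medium", 5, {"ReadWriteOnce"}),
    PersistentVolume("pv-shared", 10, {"ReadWriteMany"}),
]
print(bind_claim(pvs, 2, "ReadWriteOnce"))  # pv-medium
print(bind_claim(pvs, 2, "ReadWriteMany"))  # pv-shared
print(bind_claim(pvs, 2, "ReadWriteOnce"))  # None
```

Choosing the smallest sufficient volume avoids wasting a large PV on a small request.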

ConfigMaps (CM) and Secrets

ConfigMaps and Secrets contain a set of keys and values that can be used by other resources. They are usually used to centralize configuration values shared by several resources. Secrets differ from ConfigMaps in that Secrets' values are always encoded (not encrypted) and access to them is usually restricted to fewer authorized users.
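To show why "encoded, not encrypted" matters, this sketch encodes a value with base64, the encoding used for values in a Secret's data field, and then decodes it again without any key. The key name and password are made up for illustration.

```python
import base64

def encode_secret_value(plain: str) -> str:
    """Encode a value the way it appears in a Secret's data field (base64)."""
    return base64.b64encode(plain.encode("utf-8")).decode("ascii")

def decode_secret_value(encoded: str) -> str:
    """Decode a Secret data value back to plain text; no key is required."""
    return base64.b64decode(encoded).decode("utf-8")

# Hypothetical Secret data.
data = {"db-password": encode_secret_value("s3cr3t")}
print(data)                                       # {'db-password': 'czNjcjN0'}
print(decode_secret_value(data["db-password"]))  # s3cr3t
```

Anyone who can read the Secret can reverse the encoding, which is why restricting access to Secrets is important.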

Although Kubernetes pods can be created standalone, they are usually created by higher-level resources such as replication controllers.

OpenShift

Red Hat OpenShift Container Platform (RHOCP) is a set of modular components and services built on top of Red Hat CoreOS and Kubernetes. RHOCP adds PaaS (Platform as a Service) capabilities such as remote management, increased security, monitoring and auditing, application life-cycle management, and self-service interfaces for developers.

OpenShift Terminology

Infra Node: A node that hosts infrastructure services such as monitoring, logging, or external routing.

Console: A web interface provided by the RHOCP cluster that allows developers and administrators to interact with cluster resources.

Project: OpenShift's extension of Kubernetes namespaces. A project allows the definition of User Access Control (UAC) for resources.

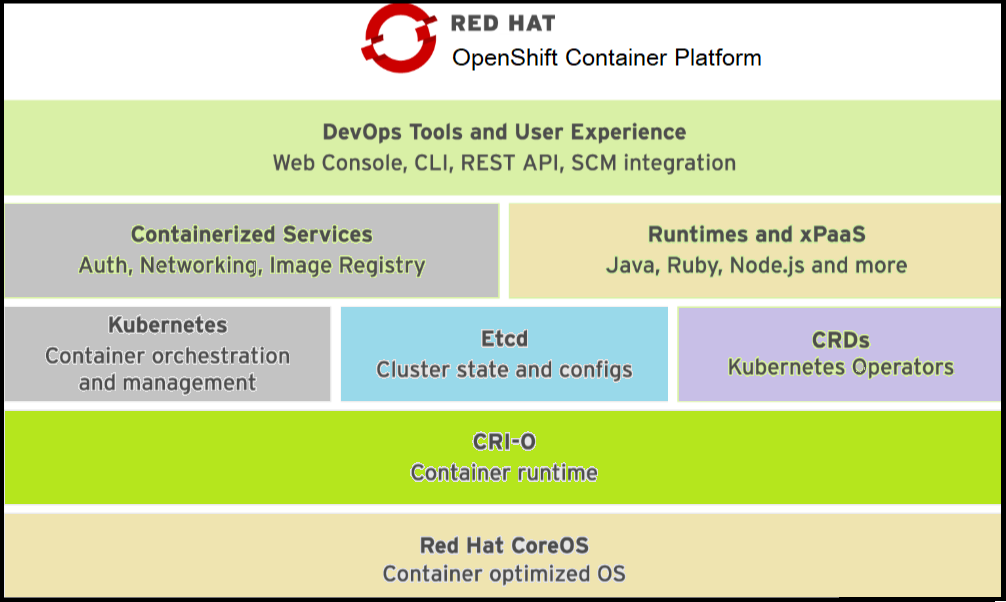

Read from bottom to top and from left to right, the picture above shows the basic container infrastructure, integrated and enhanced by OpenShift.

Red Hat CoreOS: An OpenShift cluster uses Red Hat CoreOS as its base OS. Red Hat CoreOS is a Linux distribution focused on providing an immutable operating system for container execution.

CRI-O: CRI-O is an implementation of the Kubernetes CRI (Container Runtime Interface) that enables the use of OCI (Open Container Initiative) compatible runtimes. CRI-O can use any container runtime that satisfies the OCI runtime specification, such as runc (the runtime also used by the Docker service).

Kubernetes Container Orchestration and Management: Kubernetes manages a cluster of physical or virtual hosts that run containers. It uses resources to describe multicontainer applications, the components they are composed of, and how those components interconnect.

Etcd: A distributed key-value store that Kubernetes uses to store configuration and state information about the containers and other resources inside the cluster.

Custom Resource Definitions (CRDs): CRDs are resource types stored in etcd and managed by Kubernetes. These resource types form the state and configuration of all resources managed by OpenShift.

Containerized Services: These services fulfill many PaaS infrastructure functions, such as authorization and networking. OpenShift uses the basic container infrastructure from Kubernetes and the underlying container runtime for most internal functions. That is, most OpenShift internal services run as containers orchestrated by Kubernetes.

Runtimes and xPaaS: Runtimes and xPaaS are base container images ready for use by developers, each image preconfigured with a particular runtime language or database. The xPaaS offering is a set of base images for Red Hat middleware products such as JBoss EAP and ActiveMQ. Red Hat OpenShift Application Runtimes (RHOAR) are a set of runtimes which are optimized for cloud native applications in OpenShift. The application runtimes available are Red Hat JBoss EAP, OpenJDK, Thorntail, Eclipse Vert.x, Spring Boot, and Node.js.

DevOps tools and user experience: OpenShift provides web UI and CLI tools for managing user applications and OpenShift services. The web UI and CLI tools are built on REST APIs, which external tools such as IDEs and CI platforms can also use.

Latest Features of OpenShift 4

OpenShift 4 introduced major changes from previous versions. While keeping backward compatibility with previous releases, it includes new features such as:

- Red Hat CoreOS as the mandatory operating system for control plane nodes, offering an immutable infrastructure optimized for containers.

- A cluster installer that guides the installation and update process.

- A self-managing platform that can automatically apply cluster updates and recoveries without disruption.

- Improved application life-cycle management.

- An Operator SDK to build, test, and package Operators.

OpenShift Resource Types

The main resource types that OpenShift Container Platform adds to Kubernetes are:

Deployment Config (DC)

A DC represents the set of containers included in a pod and the deployment strategy to use. A DC also provides a basic continuous delivery workflow.

Build Config (BC)

A BC defines a process to be executed in an OpenShift project. Working together with a DC, it provides a basic but extensible continuous integration and continuous delivery workflow. The OpenShift Source-to-Image (S2I) feature uses a BC to build a container image from application source code stored in a Git repository.

Routes

A route represents a DNS host name recognized by the OpenShift router as an ingress point for applications and microservices.

To get a list of all the resources available in an OpenShift cluster and their abbreviations, use the oc api-resources or kubectl api-resources command.

Although Kubernetes replication controllers can be created standalone in OpenShift, they are usually created by higher-level resources such as deployment configurations.

Networking

Kubernetes provides a software-defined network (SDN) that spans multiple nodes and allows containers in any pod, on any host, to reach pods on other hosts. The SDN is accessible only from inside the same Kubernetes cluster.

Each pod deployed in a Kubernetes cluster gets an IP address from the cluster's internal network, which is reachable only from inside the cluster. Because pods are ephemeral, IP addresses are constantly assigned and released, so containers in one pod should not connect directly to another pod's dynamic IP address. Services solve this problem by providing more stable IP addresses from the SDN that front the pods. When pods are restarted, replicated, or rescheduled to different nodes, the services are updated, providing scalability and fault tolerance.
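The following sketch illustrates that idea: a service keeps a fixed virtual IP while its list of endpoints is recomputed from whichever pods currently match its selector. The class names, addresses, and labels are hypothetical; in a real cluster this bookkeeping is done by Kubernetes controllers and kube-proxy, not by application code.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Pod:
    name: str
    ip: str
    labels: Dict[str, str]

@dataclass
class Service:
    cluster_ip: str                 # stable address that clients use
    selector: Dict[str, str]
    endpoints: List[str] = field(default_factory=list)

    def refresh(self, pods: List[Pod]) -> None:
        """Recompute endpoints from the pods that currently match the selector."""
        self.endpoints = [
            p.ip for p in pods
            if all(p.labels.get(k) == v for k, v in self.selector.items())
        ]

svc = Service(cluster_ip="172.30.0.50", selector={"app": "web"})
pods = [Pod("web-1", "10.128.0.11", {"app": "web"}), Pod("web-2", "10.129.0.4", {"app": "web"})]
svc.refresh(pods)
# web-2 is rescheduled to another node and gets a new IP; the service IP stays the same.
pods[1] = Pod("web-2", "10.130.0.8", {"app": "web"})
svc.refresh(pods)
print(svc.cluster_ip, svc.endpoints)  # 172.30.0.50 ['10.128.0.11', '10.130.0.8']
```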

Accessing containers from outside the cluster is more complicated. A Kubernetes service can specify a NodePort attribute, which is a network port that every cluster node redirects to the SDN. Alternatively, a container can map one of its ports to a port on the node it runs on. Unfortunately, neither approach scales smoothly for applications.

OpenShift makes external access to containers both scalable and simpler by defining route resources. A route defines external-facing DNS names and ports for a service. A router (ingress controller) forwards HTTP and TLS requests to the service addresses inside the Kubernetes SDN. The only requirement is that the desired DNS names are mapped to the IP addresses of the OpenShift router nodes.
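At its core, a router does host-based routing: it reads the Host header of an incoming HTTP request and forwards the request to the service registered for that host name. This minimal sketch shows only that lookup step; the host names and service addresses are hypothetical, and a real router also handles TLS, path-based routes, and load balancing.

```python
from typing import Dict, Optional

# Hypothetical route table: external DNS name -> internal service address.
routes: Dict[str, str] = {
    "shop.apps.example.com": "172.30.12.5:8080",
    "api.apps.example.com": "172.30.40.9:8080",
}

def route_request(host_header: str, table: Dict[str, str]) -> Optional[str]:
    """Return the service address for a request's Host header, or None."""
    host = host_header.split(":", 1)[0].lower()  # strip an optional :port suffix
    return table.get(host)

print(route_request("Shop.apps.example.com:443", routes))  # 172.30.12.5:8080
print(route_request("unknown.example.com", routes))        # None
```

The sketch assumes plain host names; IPv6 literals in the Host header would need more careful parsing.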

You can read more about container basics in my other post, Container Architecture.

I hope you liked this post. If you have any questions, please leave a comment below!

Thanks for reading. If you liked this post, you will probably like my next ones too, so please support me by subscribing to my blog.
