Container Technology: Introduction

Container technology is a method of packaging an application so that it can run with isolated dependencies. By compartmentalizing a computer system in this way, it has fundamentally changed how software is developed today. Let's start with the history of this technology.

Container technology was born in 1979 with Unix and the chroot system call. chroot isolates a process by restricting an application's access to a specific directory, which then serves as the root of everything the process can see, including its child directories. This was the first system with an isolated process, and it was added to BSD in 1982. Unfortunately, container technology did not progress over the next two decades and remained dormant.
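To make the idea of a restricted root directory concrete, here is a minimal sketch in Python. It does not call chroot at all (that requires root privileges); it only simulates how a path requested by a jailed process maps onto the host file system. The function name `resolve_in_jail` and the directory `/srv/jail` are made up for this illustration, and real chroot behaviour has details (such as symbolic links) that this sketch ignores.

```python
import posixpath


def resolve_in_jail(jail_root: str, requested: str) -> str:
    """Simulate where a path requested inside a chroot lands on the host.

    Hypothetical helper for illustration only: it performs no system call.
    """
    # Inside the jail every path is interpreted relative to the jail's "/".
    # normpath drops any ".." that would climb above "/", which mirrors the
    # fact that the jailed process cannot walk out through its root.
    inside = posixpath.normpath("/" + requested.lstrip("/"))
    # Map the jail-local path onto the host file system.
    return posixpath.normpath(jail_root.rstrip("/") + inside)


if __name__ == "__main__":
    for path in ["/etc/passwd", "../../etc/passwd", "/"]:
        print(path, "->", resolve_in_jail("/srv/jail", path))
```

In this simulation, a request for `../../etc/passwd` still ends up under `/srv/jail`, which is the essence of what chroot offers: the process has no name for anything outside its directory.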

Software applications typically depend on libraries, configuration files, or services that are provided by the runtime environment. Traditionally, the runtime environment for a software application is a physical host or a virtual machine, and the application's dependencies are installed as part of that host.

For example, consider a Java application that requires access to a common shared library that implements the FTP protocol. Traditionally, a system administrator installs the package that provides the shared library before installing the application.

The major drawback of a traditionally deployed software application is that the application's dependencies are entangled with the runtime environment. The application may break when updates or patches are applied to the base operating system (OS).

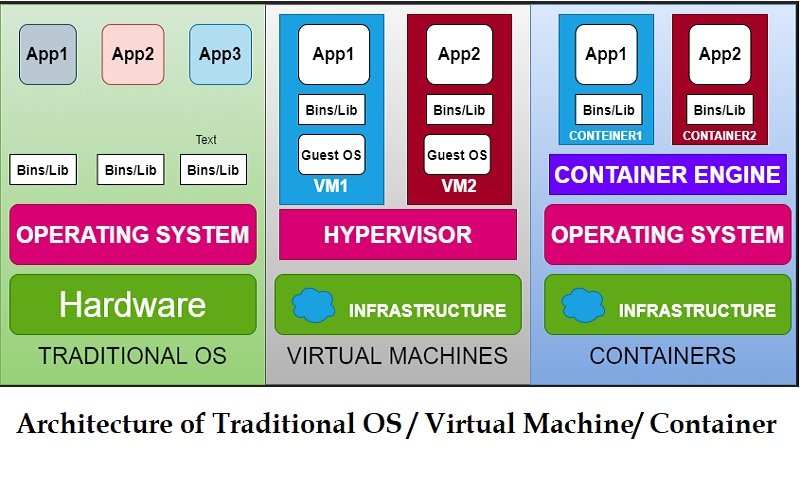

The picture above shows the differences between a traditional OS, a VM, and containers.

Alternatively, a software application can be deployed using a container. A container is a set of one or more processes that are isolated from the rest of the system. Containers provide many of the same benefits as virtual machines, such as security, storage, and network isolation. Containers require far fewer hardware resources and are quick to start and terminate. They also isolate the runtime resources (such as CPU and storage) and the libraries for an application, which minimizes the impact of any update to the host OS.

The Open Container Initiative (OCI) provides a set of industry standards that define a container runtime specification and a container image specification. The image specification defines the metadata and format for the bundle of files that form a container image. When we build an application as a container image that complies with the OCI standard, we can use any OCI-compliant container engine to run the application. This is what makes applications so easy to move between systems when using containers.
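As a rough illustration of the kind of metadata the image specification describes, the sketch below builds a manifest-shaped dictionary in Python. The media type strings and field names follow the OCI image manifest format, but the helper names (`descriptor`, `build_manifest`) are made up for this post, and the blobs are placeholder bytes rather than a real image configuration or real layer archives.

```python
import hashlib
import json


def descriptor(media_type: str, blob: bytes) -> dict:
    """Describe a blob by type, content digest, and size (hypothetical helper)."""
    return {
        "mediaType": media_type,
        "digest": "sha256:" + hashlib.sha256(blob).hexdigest(),
        "size": len(blob),
    }


def build_manifest(config_blob: bytes, layer_blobs: list) -> dict:
    """Assemble a manifest-shaped dict that points at a config and its layers."""
    return {
        "schemaVersion": 2,
        "mediaType": "application/vnd.oci.image.manifest.v1+json",
        "config": descriptor("application/vnd.oci.image.config.v1+json", config_blob),
        "layers": [
            descriptor("application/vnd.oci.image.layer.v1.tar+gzip", blob)
            for blob in layer_blobs
        ],
    }


if __name__ == "__main__":
    # Placeholder content: a real image would use an actual config JSON
    # document and gzip-compressed tar archives for the layers.
    manifest = build_manifest(b"{}", [b"placeholder layer bytes"])
    print(json.dumps(manifest, indent=2))
```

Because every blob is referenced by its digest and size, any engine that understands this format can fetch and verify the same files, which is where the portability comes from.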

There are many container engines available to manage and execute individual containers, including Rocket, Drawbridge, LXC, Docker, and Podman.

Advantages of using Containers

Environment Isolation

Containers work in an isolated environment where changes made to the host OS or to other applications do not affect the container. Because the libraries needed by a container are self-contained, the application can run without disruption. For example, each application or service can exist in its own container with its own set of libraries. An update made to one container does not affect the other containers.

Low Hardware Requirement

Containers use OS internal features to create an isolated environment, where resources are managed using OS facilities such as namespaces and cgroups. This approach needs less memory and CPU than a virtual machine hypervisor. Launching an application in a VM is also a way to isolate it from the running environment, but it requires a heavy layer of supporting services, so it consumes more memory and CPU than a container.
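On a Linux system you can see these facilities for any process under /proc. The following is a small sketch, assuming a Linux host with /proc mounted; the function names `list_namespaces` and `read_cgroups` are made up for this example, and on other systems both simply return empty results.

```python
import os


def list_namespaces(pid="self") -> dict:
    """Return {namespace name: identifier} for a process, or {} if unavailable."""
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    try:
        names = os.listdir(ns_dir)
    except OSError:
        # Not Linux, /proc not mounted, or no such process.
        return result
    for name in sorted(names):
        try:
            # Each entry is a symbolic link such as "pid:[4026531836]".
            result[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue
    return result


def read_cgroups(pid="self") -> list:
    """Return the lines of /proc/<pid>/cgroup, or [] if unavailable."""
    try:
        with open(f"/proc/{pid}/cgroup") as handle:
            return [line.strip() for line in handle if line.strip()]
    except OSError:
        return []


if __name__ == "__main__":
    for name, ident in list_namespaces().items():
        print(f"{name:20} {ident}")
    for line in read_cgroups():
        print("cgroup:", line)
```

Two processes that show the same identifier for a namespace share that namespace. Running the script on the host and again inside a container will typically show different identifiers, since the container's processes are placed in their own namespaces.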

Quick Deployment

Containers can be deployed quickly because there is no need to install an entire underlying operating system for them. Normally, to get this kind of isolation, a new OS installation is required on a physical host or VM, and even a simple update may require a full OS restart. Restarting a container, on the other hand, does not require stopping any services on the host OS or VM.

Reusability of Containers

We can reuse the same container image without setting up a full OS. For example, the same database container image that provides a production database service can be used by each developer as a database during application development. With containers, there is no need to maintain separate production and development database servers. A single container image can be used to create all the instances of the database service.

Smooth Deployment in Multiple Environments

With containers, all application dependencies and environment settings are encapsulated in the container image, so the application keeps working even if the surrounding environment changes. In a traditional deployment scenario, any difference between environments could break the application.

Containers are an ideal approach when using microservices for application development. Each service is encapsulated in a lightweight and reliable container environment that can be deployed to a production or development environment. The collection of containerized services required by an application can be hosted on a single machine, so there is no need to manage a separate machine for each service.

However, many applications are not well suited to a containerized environment. For example, applications that access low-level hardware information, such as file systems, memory, and devices, may be unreliable due to container limitations.

I hope you liked this post on container technology. If you have any questions, leave a comment below!

Thanks for reading. If you liked this post, you will probably like the next ones too, so please support me by subscribing to my blog.
